This blog post comes from Lele Ma, a Ph.D. student at William and Mary. He was recently a Google Summer of Code Intern working on the Honeynet Project.

Introduction

This post introduces the project I worked on with the Honeynet Project at Google Summer of Code this year. The TinyVMI project ports a library (LibVMI) to a tiny operating system (Mini-OS). After porting, LibVMI has all of its functionality running inside a tiny virtual machine, which is much smaller and performs better than the same library running on a Linux OS.

Mini-OS & Unikernels

Mini-OS is a tiny operating system demo distributed with the source of the Xen Project Hypervisor (abbreviated as Xen below). It has been the basis for the development of several unikernels, such as ClickOS and Rump kernels. A unikernel can be viewed as a minimized operating system with the following features:

- No ring0/ring3, or kernel/user mode separation. Traditional operating systems, like Linux, separate programs into kernel mode and user mode to prevent malicious users (applications) from accessing kernel memory. However, in unikernels like Mini-OS, there is only one mode, ring0, or kernel mode. This eliminates the burden of context switching between two modes. Both the code size of the kernel and the runtime overhead are reduced.

- A minimal set of libraries. Instead of shipping with many system and application libraries to provide a general purpose computing environment, a unikernel is configured with only the libraries that the application running in it needs, which is why it is also called a library operating system. For example, Mini-OS can be configured with libc so that users can write applications in C.

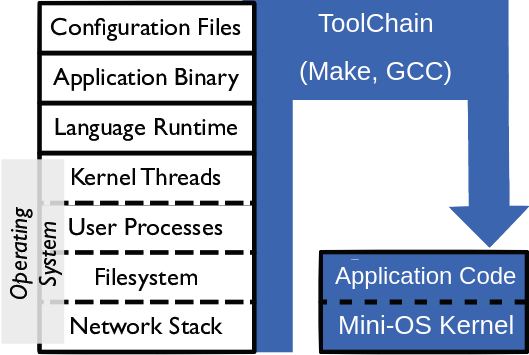

Fig.1 General Purpose OS vs. Mini-OS Unikernel

As shown in Fig.1, a unikernel is much smaller and eliminates all unnecessary tools and libraries, and even file systems, from the OS, keeping only the application code and a tiny OS kernel. Unikernels can be more efficient than traditional operating systems, especially on cloud platforms where each specialized application is usually managed in a standalone VM. Unikernels have been proposed as the next generation of cloud platforms because they improve efficiency in several respects. These include but are not limited to:

- Smaller memory footprint. A unikernel requires significantly less memory than a traditional operating system. For example, a Mini-OS VM with the LibVMI application requires only 64MB of main memory, whereas a 64-bit Linux VM would occupy 4GB of main memory to get average performance. The reduced memory footprint allows a single physical machine to host more VMs and reduces the average cost per service.

- Faster booting. Since the memory footprint is small and there are no redundant libraries or kernel modules, a tiny OS requires significantly less time to boot than a traditional OS. Booting a tiny OS is just like starting the application itself.

- No kernel mode switching. The OS kernel and the application share the same memory region. CPU context switches caused by system calls are eliminated in unikernels. Therefore, the runtime performance of a unikernel can be much better than that of a traditional OS.

- More secure. Each unikernel’s VM runs only one application. Isolation between applications is enforced by the hypervisor, instead of a shared OS kernel. Compared to process isolation or container isolation in Linux, a unikernel is more secure because isolation is enforced at a lower level.

- Easy deployment; easy to use. Unikernel applications are built into a single binary that runs directly as a VM image, which simplifies the deployment of the service. Unikernel applications are designed to be single click and run. All functionality is customized at build time. Once deployed, the binary package requires no manual modification; to change it, the whole binary package is replaced.

In brief, Mini-OS is a tiny OS that originated in the Xen Project hypervisor. Like other unikernels, Mini-OS provides higher performance and a more secure computing environment than a traditional operating system on the cloud.

Why port LibVMI to Mini-OS

LibVMI is a security-critical library that can be used to view a target VM’s raw memory from another guest VM, thus gaining a view of almost all the activities on the target VM.

Traditionally, LibVMI runs in Dom0 on the hypervisor. However, Dom0 is already very big even without LibVMI in it. I got the idea of separating LibVMI from Dom0 from the following observations:

- Dom0 is a general purpose OS hosting many daily use applications, such as administrator tools. However, LibVMI is a special purpose library and usually not for daily use. Furthermore, there are almost no direct communications between LibVMI and other applications. Thus it is not necessary to install LibVMI in Dom0.

- Security risk. Dom0 is a critical domain for the hypervisor platform. Introducing a new code base to the kernel would also introduce new security risks. Other applications on Dom0 could leverage kernel vulnerabilities to compromise LibVMI, and vice versa, a bug in LibVMI could crash other applications or even the entire Dom0 kernel.

- Performance overhead. As introduced above, a general purpose OS is large and inefficient for running a special purpose application. CPU mode switching, large memory footprints, and process scheduling all introduce overhead in Dom0.

Therefore, we propose porting LibVMI to the tiny Mini-OS, a port we call TinyVMI, to explore whether we can achieve the above benefits.

Challenges

First, the hypervisor prevents each guest VM from reading other VMs’ memory pages. A guest VM must be granted sufficient permissions before it can be used to introspect another VM’s memory. Second, LibVMI depends on several libraries that are not supported in the original Mini-OS. In this project, we look for solutions to these two challenges.

Permissions for accessing another VM’s memory

To introspect a VM’s memory from another guest VM, the first step is to get permission from the hypervisor. By default, the memory pages of each VM are strictly isolated: a VM is not allowed to access the memory pages of other VMs. Although the hypervisor lets programmers share memory pages between two VMs through grant tables, this requires the target VM to explicitly offer the page for sharing. Since the target VM is not trusted and no changes should be made to it, LibVMI instead uses foreign memory mapping hypercalls to map memory pages from the target VM into its own address space. Whether a guest VM (or Dom0) may map a foreign page (a page of the target VM) into its own address space is controlled by the Xen Security Module (XSM), which is introduced below.

Furthermore, Xen event channels allow a guest VM to monitor its memory status in real time with the help of hardware interrupts. A ring buffer is shared between the hypervisor and the guest kernel to transfer event information. Access to the ring buffer must also be granted through XSM.

Xen Security Module (XSM) uses FLASK policies, as in SELinux, to enforce Mandatory Access Control (MAC) between different domains. By default, every permission is denied unless it is explicitly allowed in the policy. Permissions are granted according to the categories the guest domain belongs to, such as its type, role, user, and attributes.
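To make the shared ring buffer idea concrete, here is a toy single-producer, single-consumer ring in Python. It is only a sketch of the general technique: the class name `EventRing` and its methods are hypothetical, and it does not reproduce Xen's actual ring layout or memory-sharing mechanism. The producer stands in for the hypervisor posting events, and the consumer for the introspecting guest reading them.

```python
class EventRing:
    """Toy single-producer/single-consumer ring with free-running indices."""

    def __init__(self, size):
        # A power-of-two size lets an index be wrapped with a bit mask.
        if size <= 0 or size & (size - 1):
            raise ValueError("size must be a power of two")
        self.slots = [None] * size
        self.mask = size - 1
        self.prod = 0  # advanced only by the producer
        self.cons = 0  # advanced only by the consumer

    def put(self, event):
        """Producer side: return False when the ring is full."""
        if self.prod - self.cons == len(self.slots):
            return False
        self.slots[self.prod & self.mask] = event
        self.prod += 1
        return True

    def get(self):
        """Consumer side: return None when the ring is empty."""
        if self.cons == self.prod:
            return None
        event = self.slots[self.cons & self.mask]
        self.cons += 1
        return event
```

Because each index is written by only one side, the two sides need no lock in this model; they only need to see each other's index updates.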

The category of a VM is set by a security label in the configuration file we use to create it via xl create <config_file>. For example, the label system_u:system_r:domU_t1 marks the VM as type domU_t1, under the role system_r and the user system_u. Type is the lowest level of the category. Multiple types can be grouped under one role, and multiple roles under one user.
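As a rough illustration of how such a label breaks down, the sketch below splits a label string into its user, role, and type. It assumes the label is written as user:role:type joined by colons; the names `SecurityLabel` and `parse_seclabel` are hypothetical helpers for this post, not part of Xen.

```python
from typing import NamedTuple


class SecurityLabel(NamedTuple):
    user: str
    role: str
    type: str


def parse_seclabel(label: str) -> SecurityLabel:
    """Split an assumed user:role:type label into its three fields."""
    parts = label.split(":")
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"expected user:role:type, got {label!r}")
    return SecurityLabel(*parts)


label = parse_seclabel("system_u:system_r:domU_t1")
print(label.type)  # the field that policy rules are written against
```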

Permissions are granted based on the type of a VM. For example, the map_read permission allows a domain to map another domain’s memory read-only. A policy rule that grants map_read from type domU_t1 to type domU_t2 will allow a VM with type domU_t1 to read the memory of another VM with type domU_t2.
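The default-deny behavior can be modeled in a few lines. This is a toy model, not the FLASK policy language or Xen's implementation: the rule set and the function `is_allowed` are hypothetical, and each rule is reduced to a (source type, target type, permission) triple.

```python
# Each rule is (source_type, target_type, permission).
# Anything not listed here is denied.
ALLOW_RULES = {
    ("domU_t1", "domU_t2", "map_read"),
}


def is_allowed(source_type, target_type, permission, rules=ALLOW_RULES):
    """Return True only when an explicit allow rule matches."""
    return (source_type, target_type, permission) in rules


print(is_allowed("domU_t1", "domU_t2", "map_read"))   # explicitly allowed
print(is_allowed("domU_t2", "domU_t1", "map_read"))   # no rule, so denied
print(is_allowed("domU_t1", "domU_t2", "map_write"))  # no rule, so denied
```

Note that the rule is directional: allowing domU_t1 to read domU_t2 says nothing about the reverse direction.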

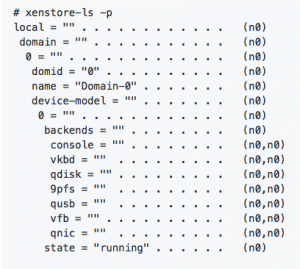

In addition to the permissions granted by XSM, we also need permission to read information from Xenstore, which is used to get metadata about the target VM, such as the domain ID for a given domain name. Xenstore permissions can be listed with the command xenstore-ls -p.

The meaning of each permission can be found in the manual. The xenstore-chmod command can be used to grant read permission to specific VMs, for example to enable the VM with ID 8 to read the Xenstore directory /local/domain.
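The sketch below shows one way to interpret a Xenstore permission list. It rests on assumptions that should be checked against the Xenstore manual: that a permission list is a comma-separated string such as n0,r8, that each entry is a letter (n for none, r for read, w for write, b for both) followed by a domain ID, and that the first entry names the owner and gives the default for domains not listed. The functions `parse_perms` and `can_read` are hypothetical, and privileged-domain special cases are ignored.

```python
def parse_perms(text):
    """Turn a string like 'n0,r8' or '(n0,r8)' into [(letter, domid), ...]."""
    entries = []
    for item in text.strip("()").split(","):
        item = item.strip()
        if not item or item[0] not in "nrwb":
            raise ValueError(f"bad permission entry: {item!r}")
        entries.append((item[0], int(item[1:])))
    if not entries:
        raise ValueError("empty permission list")
    return entries


def can_read(perms, domid):
    """Apply the assumed rules: owner, then explicit entry, then default."""
    default_letter, owner = perms[0]
    if domid == owner:
        return True
    for letter, entry_domid in perms[1:]:
        if entry_domid == domid:
            return letter in "rb"
    return default_letter in "rb"


perms = parse_perms("n0,r8")
print(can_read(perms, 8), can_read(perms, 9))
```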

Build New Libraries into Mini-OS

The next challenge is building new libraries into Mini-OS. Mini-OS is an exemplary minimal operating system. To keep the kernel small, only a few libraries can be built into it: newlib as the C library, Xen-related libraries such as libxc to communicate with the hypervisor, and lwip for basic networking.

To port LibVMI to Mini-OS, two more libraries are needed: a JSON library, libjson-c, to parse Rekall profiles, and a library of utility data structures, GLib.

In theory, most libraries written in C, such as libjson-c, can be built into Mini-OS with the help of newlib. This post introduces how to build new libraries. However, some of them, such as GLib, might need to be manually customized for Mini-OS by removing the unsupported portions.

Furthermore, security applications written in C++ can also be ported to Mini-OS. For example, DRAKVUF is a binary analysis system built on top of LibVMI and Xen, and a portion of its code is written in C++. To build this code in Mini-OS, we need to cross-compile the C++ standard libraries into the tiny kernel.

Project Status & Results

Functions added to Mini-OS

- Support for LibVMI functions to introspect Linux and Windows guests on the x86 architecture. Both memory access and event support are implemented. The ARM architecture and other OS kernels (such as FreeBSD) have not been explored yet.

- A customized GLib and a statically compiled libjson-c are cross-compiled into Mini-OS.

- C++ language support. The C++ standard library from GCC was cross-compiled into static libraries, such as libgcc and libstdc++. Now we can program in C++ in Mini-OS, not only in C. Detailed steps can be found in this post.

- A GitHub documentation site and a blog are maintained to document how to build and run TinyVMI, and to track the progress of each step during the summer.

Performance Analysis

To evaluate the TinyVMI system, we conducted a simple analysis and experiment to show its efficiency. We built two VM domains with LibVMI on the same hypervisor for comparison: a guest VM running Mini-OS with LibVMI, and Dom0 running Linux (Ubuntu 16.04) with LibVMI. The target VM being introspected is a 64-bit Linux (Ubuntu 16.04). Results are shown in Fig.2 and Fig.3.

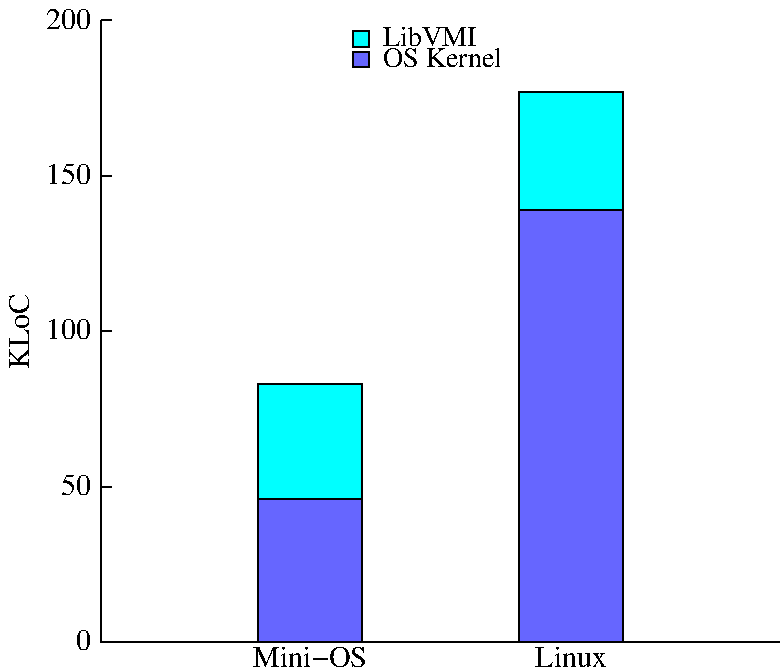

Fig.2 Code Size of LibVMI and Different Kernels

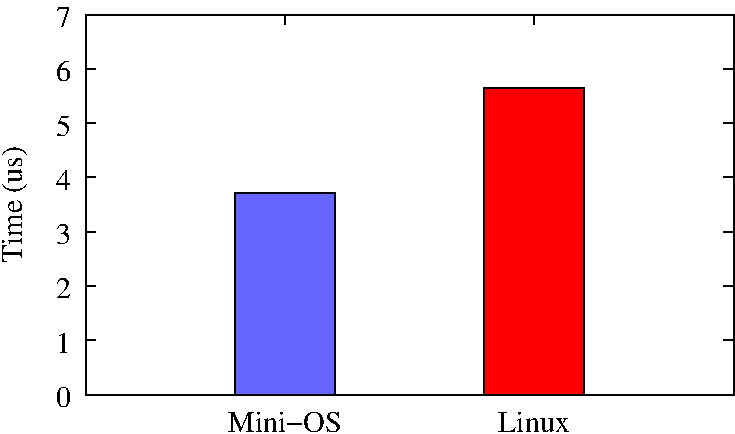

Fig.3 Time in Walking Through Page Table

Fig.2 shows the overall code size of the OS with LibVMI in it. LibVMI with Mini-OS totaled 83K Lines of Code (LoC), while LibVMI with the Linux kernel had 177K LoC, a reduction of more than 50% in code size. Note that the LoC of the Linux kernel does not include any driver code, so it reflects only the minimal possible size of a Linux kernel. If drivers were included, it could be 15M+ LoC for a Linux system.

Fig.3 shows the time taken to read one page by walking the 4 levels of the page table while introspecting a 64-bit Linux guest VM. The time is an average over reading 500 consecutive pages. LibVMI in Mini-OS took 3.7 microseconds, while LibVMI in Linux took 5.7 microseconds, saving more than 30% of the time.
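The code size figure can be rechecked with a short calculation. The helper name `relative_reduction` is hypothetical, and the inputs are the rounded LoC counts quoted above.

```python
def relative_reduction(baseline, reduced):
    """Fraction by which `reduced` is smaller than `baseline`."""
    return (baseline - reduced) / baseline


# LibVMI with the Linux kernel (177K LoC) vs. LibVMI with Mini-OS (83K LoC).
loc_saving = relative_reduction(177_000, 83_000)
print(f"{loc_saving:.1%}")  # prints 53.1%
```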
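For readers unfamiliar with the four-level walk, the sketch below shows how a 64-bit virtual address is split into the indices used at each level. It assumes standard x86-64 4-level paging with 4KB pages, and it is not LibVMI code: the function name `split_virtual_address` is hypothetical. A real walk would use each index to read an entry from the corresponding table in the target VM's memory, which is why reading one page costs several memory accesses.

```python
def split_virtual_address(vaddr):
    """Return the four table indices and the page offset for a 4KB page."""
    return {
        "pml4": (vaddr >> 39) & 0x1FF,  # bits 47..39, level 4 index
        "pdpt": (vaddr >> 30) & 0x1FF,  # bits 38..30, level 3 index
        "pd": (vaddr >> 21) & 0x1FF,    # bits 29..21, level 2 index
        "pt": (vaddr >> 12) & 0x1FF,    # bits 20..12, level 1 index
        "offset": vaddr & 0xFFF,        # bits 11..0, byte within the page
    }


print(split_virtual_address(0xFFFFFFFF81000000))
```

Each index is 9 bits wide because a 4KB table holds 512 eight-byte entries.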

Conclusion

To briefly conclude the project, we have successfully ported the core functionality of LibVMI to Mini-OS, the tiny OS on Xen. By customizing the XSM policy specifications and Xenstore permissions, a guest VM has been granted permission to introspect another guest VM via VMI techniques. By customizing and cross-compiling static libraries into Mini-OS, we have built LibVMI in a tiny OS, enabling a tiny VM to introspect both Linux and Windows guest VMs. Evaluations show that code size is reduced by more than 50% and performance is improved by more than 30% compared to VMI operations in Dom0 on the hypervisor.

Future Directions

- DRAKVUF integration. After the last week of GSoC, C++ language support was added to TinyVMI with the help of this post from Notes to self. The next step would be cross-compiling the DRAKVUF system into TinyVMI. This will enable more applications to take full advantage of the LibVMI interfaces already provided in Mini-OS.

- Dom0 Introspection. We all know Dom0 is huge. Although much work has been done to disaggregate it, it is still huge. TinyVMI itself has a small trusted computing base (TCB). However, we still need to trust Dom0 to enforce the XSM policies, which enlarges the TCB of the system significantly. Since we have to trust Dom0, it is useless to monitor the main memory of Dom0 from TinyVMI. A further step to disaggregate Dom0 would be to separate the XSM management interface into another sub-domain, or into the same domain as TinyVMI. Taking this apart would make it possible to eliminate Dom0 from the trusted computing base and allow TinyVMI to monitor Dom0 via VMI techniques.

Acknowledgment

Thanks to my mentors, Steven Maresca and Tamas K Lengyel, for accepting me as a student in GSoC this year. This is my first time at GSoC, and this exciting project could not have been achieved without your prompt, helpful instructions and graceful patience. Thanks to Zibby Keaton for checking the grammar of this post. Thanks to all the Google Summer of Code committees for providing such a great opportunity for us to explore the world of open source!